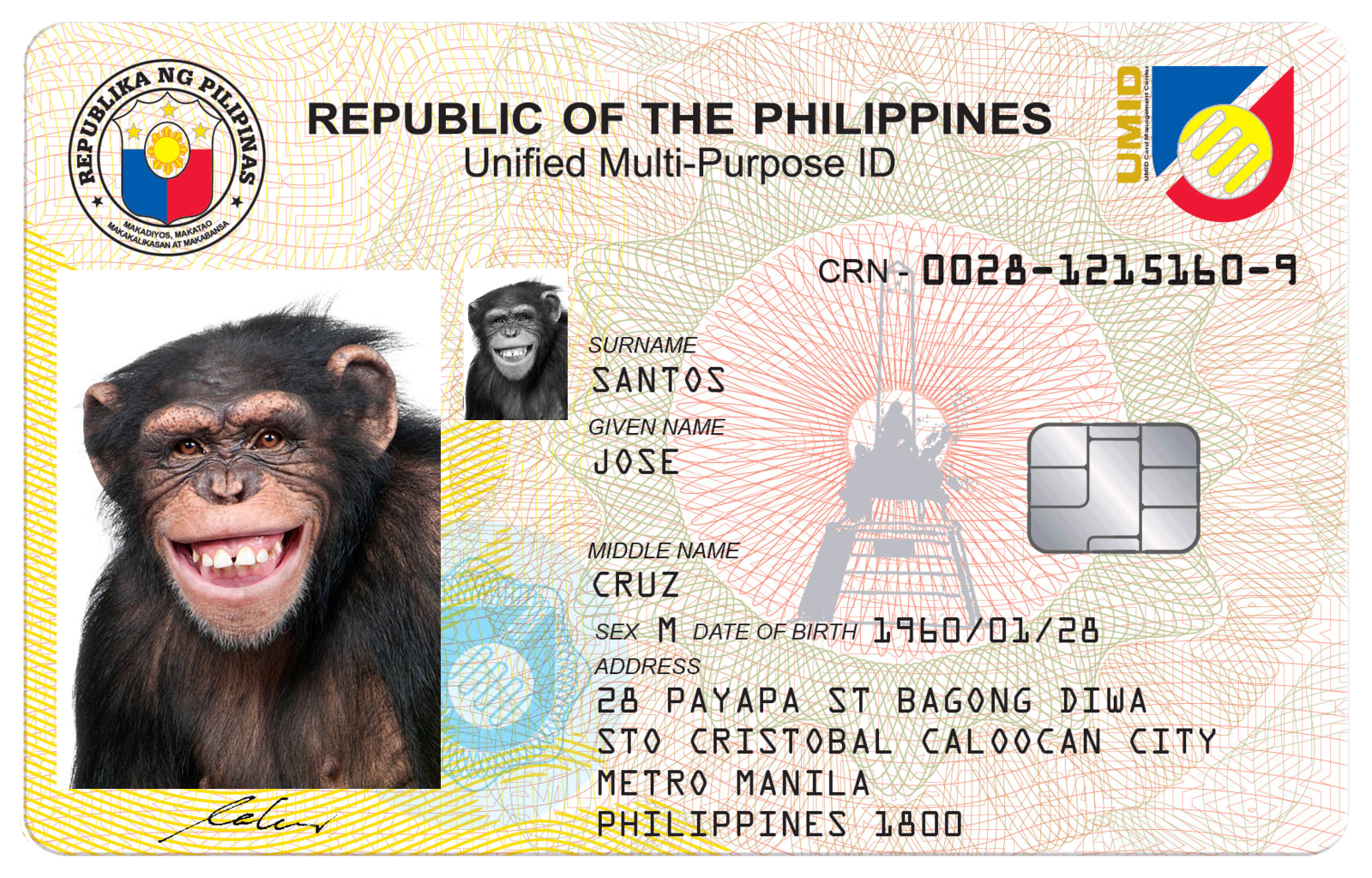

Recent events in the Philippines caused quite a stir when an audit by the National Bureau of Investigation (NBI) Cybercrime Division revealed that IDs featuring the image of a monkey could be used to register SIM cards at several major telecom companies. This vulnerability has raised concerns about the security of the automated identity verification (IDV) solutions used to prove users’ identities, as required by the Philippines’ SIM Registration Act.

So what happened? How does a monkey register and order a SIM card, and what can be done to prevent this absurdity without thousands of human inspectors? In this blog post, we’ll explain what led to this vulnerability and how state-of-the-art technology can provide telecom companies with accurate automated IDV solutions to streamline their business, eliminate fraud and avoid furry embarrassments.

How did a monkey register a SIM card?

The incident was revealed on August 5th in a Senate hearing on a routine audit that the NBI performed as required by the Philippines’ SIM Registration Act, which was enacted in December of last year. An extended deadline gave telecom companies until July 25th, 2023 to register users’ SIM cards in order to reduce fraud risks such as SIM swapping.

The registration process can be quickly performed online using automated IDV systems, which require users to prove their identity by uploading a photo of their government ID and providing a matching selfie. As part of the audit, the NBI was able to pass the registration process using a fake ID with an image of a monkey that matched the monkey’s selfie photo.

As automated IDV services are designed to analyze IDs and selfies to ensure they are both matching and authentic, this could have been easily prevented.

The process of registering a SIM card using a monkey’s data is a trust violation that should have been discovered twice — once during document validation, as the document could not be authentic (unless the monkey starts paying taxes), and a second time during liveness checks, as a human was not present in the selfie image captured.

Detecting fraud during document inspection

Official ID documents, such as passports, driver’s licenses and identity cards, play a crucial role in verifying individuals’ identities. However, these documents are vulnerable to spoofing and tampering attacks that allow fraudsters with fake, stolen or altered IDs to masquerade as legitimate users during registration and onboarding.

Types of IDV attacks include:

- Counterfeit attacks: Fake IDs that replicate the visual appearance, security features and data content of genuine documents can be used by fraudsters to pass inspections. This could be the toughest attack vector for fraudsters, as it requires special equipment and understanding of the specific document traits to create authentic-looking fake IDs. Of course, authenticity is a spectrum, and most of these attacks use standard card printers, with limited ability to create security features.

- Tampering attacks: These attacks use genuine ID documents that have been altered by modifying or replacing information such as the photo, name or expiration date. The alteration can be physical, such as pasting over the original data, or digital, such as editing the document image with software. This requires access to a legitimate ID (easy to obtain, as most fraudsters have their own) and knowledge of which specific details must be changed.

- Presentation attacks: In these attacks, fraudsters leverage genuine IDs that have been masked or disguised to impersonate the legitimate document holder. The most common presentation attacks use screen captures of documents obtained either by social engineering or leaked data from private or government databases.

To combat these threats, advanced technology and methods for recognizing and preventing fake or non-matching IDs and selfies have become essential elements of secure registration.

How to detect spoofing and tampering attacks

Each of the attack types listed above has its weaknesses, and modern IDV solutions can detect the vast majority of these attacks. Advanced document verification technology uses optical character recognition (OCR) and image analysis to check for consistency in ID data and the presence of security features. This includes verifying fonts, holograms, watermarks and microprinting.

Using deep learning algorithms, models can be trained to recognize patterns associated with genuine IDs and flag suspicious documents based on discrepancies in image quality, the use of incorrect fonts or document templates and other visual elements. Models can also be trained on unique features of digital images, such as spatial aliasing and moiré patterns, to detect different kinds of presentation attacks.

In addition, discrepancies within the same document can reveal forged or tampered elements, as IDs often carry the same data in different locations, such as visible text, barcodes, QR codes, machine-readable zones (MRZ) and more.
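One well-known instance of this kind of internal consistency is the check digit scheme that ICAO Doc 9303 defines for machine-readable zones: fields such as the document number, date of birth and expiry date are each followed by a digit computed from the field itself. The Python sketch below shows that computation. The function names are illustrative, the sample values are specimen-style data, and nothing here describes how any particular IDV product implements the check.

```python
def mrz_char_value(ch: str) -> int:
    """Map one MRZ character to its numeric value: digits keep their value,
    A-Z map to 10-35, and the filler character '<' counts as 0."""
    if "0" <= ch <= "9":
        return int(ch)
    if "A" <= ch <= "Z":
        return ord(ch) - ord("A") + 10
    if ch == "<":
        return 0
    raise ValueError(f"invalid MRZ character: {ch!r}")


def mrz_check_digit(field: str) -> int:
    """Weighted sum with repeating weights 7, 3, 1, taken modulo 10."""
    weights = (7, 3, 1)
    total = sum(mrz_char_value(ch) * weights[i % 3] for i, ch in enumerate(field))
    return total % 10


def field_is_consistent(field: str, check_digit: str) -> bool:
    """True when the check digit printed after a field matches the field."""
    if len(check_digit) != 1 or not "0" <= check_digit <= "9":
        return False
    return mrz_check_digit(field) == int(check_digit)


# Specimen-style values: a birth date of 740812 carries check digit 2.
print(field_is_consistent("740812", "2"))  # True
# Editing the year without recomputing the digit breaks the match.
print(field_is_consistent("750812", "2"))  # False
```

A fraudster who also recomputes the digit will pass this test, so it is best treated as one cheap signal among the others described here rather than as proof of authenticity.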

Modern ID documents, such as biometric passports, may also include RFID or NFC chips that can be read by most mobile devices and contain secure data that can be verified against the printed information. By neglecting to replicate identity data in each of the places where it is present, fraudsters can leave clues that an ID has been tampered with.
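As a rough illustration of that cross-checking, the Python sketch below compares the same identity fields as extracted from several places on a document and reports any that disagree. The source names (`visual_text`, `mrz`, `barcode`), the field names and the normalization rule are all hypothetical; real extraction would come from OCR, barcode decoding and chip reading, which are out of scope here.

```python
import re
from typing import Dict


def normalize(value: str) -> str:
    """Uppercase and keep only ASCII letters and digits, so that
    'De la Cruz', 'DE<LA<CRUZ' and 'DE LA CRUZ' compare equal.
    Deliberately crude: accented letters are dropped, not transliterated."""
    return re.sub(r"[^A-Z0-9]", "", value.upper())


def find_mismatches(sources: Dict[str, Dict[str, str]]) -> Dict[str, Dict[str, str]]:
    """Return {field: {source: raw value}} for every field whose normalized
    value differs between at least two of the sources that carry it."""
    mismatches: Dict[str, Dict[str, str]] = {}
    fields = {field for data in sources.values() for field in data}
    for field in sorted(fields):
        seen = {name: data[field] for name, data in sources.items() if field in data}
        if len({normalize(value) for value in seen.values()}) > 1:
            mismatches[field] = seen
    return mismatches


# Hypothetical extraction results: the barcode still holds a different
# birth year from the one shown in the printed text and the MRZ.
document = {
    "visual_text": {"surname": "De la Cruz", "birth_date": "1990-01-31"},
    "mrz": {"surname": "DE<LA<CRUZ", "birth_date": "19900131"},
    "barcode": {"surname": "DE LA CRUZ", "birth_date": "1985-01-31"},
}

for field, values in find_mismatches(document).items():
    print(field, values)
```

In this example only `birth_date` is reported, because the surname normalizes to the same string in all three sources. A production system would likely need per-field normalization (dates, transliteration) rather than one shared rule.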

Detecting fraud using selfie capture

By allowing users to use a unique property that is always with them — their face — to prove who they say they are, facial verification systems give users and organizations alike a convenient and secure method for remote onboarding and step-ups. When this task is performed using machine learning (ML) algorithms, which identify users based on their unique facial features, businesses can reduce or eliminate the need for human review, enabling lower operational costs and faster, more scalable verification.

However, unlike humans, who can easily distinguish human faces from other objects, ML algorithms must be trained not only to determine whether two images contain the same face, but also to detect the presence of a genuine human face. In the case of the recent NBI audit, fake IDs were able to pass IDV checks because the system neglected to perform this crucial second task.

This presents a major security vulnerability: a fraudster could create a realistic counterfeit ID with a non-human face (such as a person wearing a mask, a doll or, in our case, a monkey) and present the same face for their selfie in order to pass IDV checks. As a result, they could gain unauthorized access to sensitive information or devices.
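To make the gap concrete, here is a minimal Python sketch of a decision step that treats face matching and human-face detection as separate gates. The `SelfieCheck` type, the score names and both thresholds are assumptions made for illustration; they do not describe how the audited systems, or any specific product, are implemented.

```python
from dataclasses import dataclass


@dataclass
class SelfieCheck:
    match_score: float  # similarity between ID photo and selfie, 0..1 (assumed scale)
    human_score: float  # confidence that the selfie shows a human face, 0..1 (assumed scale)


MATCH_THRESHOLD = 0.80  # illustrative value, not a recommendation
HUMAN_THRESHOLD = 0.90  # illustrative value, not a recommendation


def match_only(check: SelfieCheck) -> bool:
    """Accept whenever the two faces look alike, whatever they are faces of."""
    return check.match_score >= MATCH_THRESHOLD


def match_and_human(check: SelfieCheck) -> bool:
    """Accept only when the faces match and the selfie shows a human."""
    return match_only(check) and check.human_score >= HUMAN_THRESHOLD


# A monkey selfie compared against a monkey ID photo: a near-perfect match,
# but almost no evidence of a human face.
monkey = SelfieCheck(match_score=0.97, human_score=0.02)
print(match_only(monkey))       # True
print(match_and_human(monkey))  # False
```

The point of keeping the two checks separate is that a high match score says nothing about what was matched, so neither gate can stand in for the other.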

Methods to distinguish human from humanlike faces

Caught between the need for secure digital account opening and the demand for fast, scalable and automated IDV, businesses may feel like they have a tough choice to make, but they don’t need to choose between security and customer experience.

Instead, they should ensure that their IDV solution is designed to tackle the challenge of differentiating between human and humanlike faces, as the one we’ve developed here at Transmit Security is.

When choosing a vendor, businesses should look for the use of ML models that improve verification in two key areas:

- Facial Feature Analysis: Algorithms can analyze the relative positions and proportions of facial features such as noses, eyes and mouths to identify human faces accurately and distinguish them from animal and other faces that lack the same features in the same configuration. By training ML models on extensive datasets of both human and humanlike faces, models can learn to recognize patterns and features that distinguish between the two in order to improve recognition accuracy.

- Liveness Detection: Incorporating liveness detection measures can help ensure that the subject is a live human and not a static image, doll or taxidermied animal. Techniques like blink detection and facial movement analysis can be employed to verify liveness.
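Blink detection, for example, is often built on the eye aspect ratio (EAR), a published heuristic that compares the vertical and horizontal distances between six eye landmarks. The Python sketch below assumes that some upstream landmark detector has already produced those six points for each video frame. The point ordering, the 0.2 threshold and the two-frame minimum are illustrative assumptions that would need tuning, and this is not a description of any vendor's implementation.

```python
from math import dist
from typing import Iterable, Sequence, Tuple

Point = Tuple[float, float]


def eye_aspect_ratio(eye: Sequence[Point]) -> float:
    """EAR for six landmarks in this assumed order: p1 outer corner,
    p2 and p3 on the upper lid, p4 inner corner, p5 and p6 on the lower lid
    (p6 opposite p2, p5 opposite p3). Open eyes give larger values."""
    p1, p2, p3, p4, p5, p6 = eye
    horizontal = dist(p1, p4)
    if horizontal == 0:
        raise ValueError("degenerate eye landmarks")
    return (dist(p2, p6) + dist(p3, p5)) / (2.0 * horizontal)


def count_blinks(ear_per_frame: Iterable[float],
                 closed_below: float = 0.2,
                 min_closed_frames: int = 2) -> int:
    """Count runs of at least `min_closed_frames` consecutive frames with
    EAR below `closed_below` that are followed by the eye reopening."""
    blinks = 0
    closed_run = 0
    for ear in ear_per_frame:
        if ear < closed_below:
            closed_run += 1
        else:
            if closed_run >= min_closed_frames:
                blinks += 1
            closed_run = 0
    return blinks


# Made-up EAR sequences: a live capture with two blinks, and a static photo.
live_capture = [0.31, 0.30, 0.12, 0.10, 0.29, 0.32, 0.11, 0.09, 0.08, 0.30]
static_photo = [0.30] * 10
print(count_blinks(live_capture))  # 2
print(count_blinks(static_photo))  # 0
```

A static image yields a flat EAR and no blinks, which is what this check is for. A blink check on its own would not reject a live animal, however, which is why it belongs alongside the facial feature analysis above rather than in place of it.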

While progress has been made in distinguishing between human and humanlike faces in facial verification, accurate identification can still be difficult. Facial recognition software has famously confused human and gorilla faces in the past, exposing how demographic biases in AI models can lead to such errors.

As a result, it’s crucial that businesses look for IDV vendors that can not only correctly identify human faces, but ensure their AI models reduce — not amplify — harmful cultural biases.

Fast, scalable and secure identity verification with Transmit Security

As the NBI audit revealed, ML algorithms can sometimes fail at identity verification tasks that are easy for human reviewers, such as distinguishing human selfies from animal ones. However, with the right solution, presentation attacks that attempt to fool IDV systems through the use of matching humanlike selfies and ID photos can be detected, either by recognizing the forged or tampered document or during biometric authentication.

Transmit Security’s Identity Verification Services allow businesses not only to automate digital identity verification, but also to ensure that it relies on ML models that do not make commonsense errors like the ones exposed in the recent NBI telecom audit. To find out more about how our Identity Verification Services guard against presentation attacks, view our full service brief or contact Sales to set up a meeting.